
Coach Dan John likes to talk about chasing rabbits. The line goes something like this: “If you chase two rabbits, you won’t catch either.” The point isn’t that you can only ever have one goal; it’s that you only have enough time and capacity to push one priority forward at a time.

In the strength world, that’s the classic mistake of trying to get significantly bigger and stronger while attempting to get lean. Beginners might ride that wave for a while, but eventually those two goals require different sets of behaviors and habits. Trying to do both at the same time usually leads to frustration.

Zooming out, the better move is to pick one goal from a list of related ones and commit to it for long enough to matter. The trick, as Dan John puts it, is “keeping the goal the goal.” Once you’ve picked the priority, you need to ignore the temptation to change the plan midstream.

That’s what this article is about: selecting a small battery of benchmarks, pursuing them with focus, and then rotating them from time to time so you keep making long-term progress.


The Value of Testing

There’s an old management line saying, “You cannot improve what you cannot measure.”

Another variation is, “What gets measured gets managed.”

It’s simplified, sure, but the core idea is sound: if you want to improve something, then you need a way to gauge if you’re actually making progress or just staying busy.

The hard part is learning to measure the right things. When I was in the Air Force, I liked to combine two ideas: the most visible metrics are often the least useful, and the things that matter most are often the hardest to measure.

A good example is the recent wave of articles about how grip strength correlates with longevity and a lower risk of all-cause mortality. The takeaway most people ran with was “train your grip,” which led several “coaches” to tell everyone to do dead hangs or use grip crushers. The context everyone missed was that grip strength in the study was merely a proxy for overall muscle mass and strength.

The grip strength isn’t the magic. The broader capability behind it is.

That’s the point of testing. A test doesn’t have to be perfect to be useful. It just needs to be repeatable and honest, and it has to relate to the thing you actually care about. When the metric is chosen well, genuinely improving it means building habits and practice that also pay off toward your long-term goals.

What Makes a Good Benchmark?

Good benchmarks share a few recurring characteristics:

- Repeatable and consistent under stable conditions

- Can improve through multiple legitimate training paths

- Predictive of the real goal

- Difficult to game or artificially inflate

- Sensitive to real progress without excessive noise

- Has a long runway for continued growth

The goal is choosing a metric that relates directly to your objective, can be practiced legitimately, and cannot be mastered overnight. Let’s look closer at these.

Repeatable and Consistent

In statistics, this is called reliability. A good benchmark gives similar results when nothing meaningful has changed. If a benchmark fluctuates because of changes in conditions rather than actual changes in performance, it loses its usefulness.

One way to think about this is testing the accuracy of ammunition. Let’s say you work up a batch of .308 handloads and test it in a Tikka T3x rifle. After testing, you change the powder load and retest with a Howa 1500 rifle. The group sizes changed, but was it the fault of the change in powder or the fact that you used a different rifle?

Or, let’s say that you used the same rifle. On the first trip, you shot a single five-round group. On the second trip, you shot five separate five-round groups for twenty-five total shots. That is not a consistent method, and it undermines any comparison between the two trips.
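If you keep a log of your range trips, a quick check before comparing two sessions keeps you honest. Below is a minimal Python sketch of that idea; the `GroupTest` record and its helper functions are hypothetical names for illustration, not part of any real tool. It lists what changed between two sessions and only treats the comparison as a clean test of the load when the load is the single thing that changed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupTest:
    """One range session of group testing (hypothetical record layout)."""
    rifle: str            # which rifle fired the groups
    load: str             # which handload recipe was tested
    groups: int           # number of groups fired
    shots_per_group: int  # rounds in each group


FIELDS = ("rifle", "load", "groups", "shots_per_group")


def what_changed(a: GroupTest, b: GroupTest) -> list[str]:
    """List every field that differs between two sessions."""
    return [name for name in FIELDS if getattr(a, name) != getattr(b, name)]


def isolates_load_change(a: GroupTest, b: GroupTest) -> bool:
    """True only if the load is the one and only thing that changed."""
    return what_changed(a, b) == ["load"]


trip_1 = GroupTest(rifle="Tikka T3x", load="load A", groups=1, shots_per_group=5)
trip_2 = GroupTest(rifle="Howa 1500", load="load B", groups=1, shots_per_group=5)
print(what_changed(trip_1, trip_2))  # ['rifle', 'load']
```

With the rifle swap described above, two things changed at once, so the group sizes can’t tell you anything about the powder change by itself.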

I’m fond of saying that accuracy is a product of consistency. It holds true for how you measure performance, too.

Improves Through Multiple Training Paths

The metric should respond to genuine changes in skill or ability, not to one narrow trick. A metric that a single trick can move leads to higher scores through “solving the test” rather than actually improving your abilities.

On the other hand, if your score on an action shooting course of fire goes up after you add proven training methods, like a par time ladder, to your dry fire sessions, that indicates you improved overall.

Strong benchmarks reward broad, transferable improvement.

Predictive of the Actual Goal

This is the “so what?” factor. A benchmark only matters if it connects to the thing you actually care about. In the realm of Mental Marksmanship, the goal is something you are trading your bandwidth and training time to achieve, so the benchmark you choose must directly support that effort.

Sometimes it’s direct. A higher score on the action pistol course of fire correlates strongly with performance in a match, which is motivating.

Other times, the connection might be more abstract.

Don’t be afraid to test this for yourself. If your squat gets stronger, what happened to your jumping power? If that went up, too, then you know your squat benchmark relates to your jumping goal. Conversely, if your squat goes up but your jump does not, then maybe you’re chasing the wrong rabbit.
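Here is one way to run that check on your own numbers, as a short Python sketch. The function names, the percent-change comparison, and the 1% threshold for calling a jump gain “real” are all assumptions for illustration. Two test dates are thin evidence, so treat the answer as a prompt to keep watching rather than a verdict.

```python
def percent_change(before: float, after: float) -> float:
    """Change from before to after, as a percentage of before."""
    return (after - before) / before * 100.0


def carryover_check(squat_before: float, squat_after: float,
                    jump_before: float, jump_after: float,
                    min_jump_gain_pct: float = 1.0) -> str:
    """Hypothetical check: did the broad jump improve along with the squat?

    min_jump_gain_pct is an assumed threshold, not an established standard.
    """
    squat_gain = percent_change(squat_before, squat_after)
    jump_gain = percent_change(jump_before, jump_after)
    if squat_gain <= 0:
        return "squat did not improve; nothing to conclude yet"
    if jump_gain >= min_jump_gain_pct:
        return "jump improved with the squat; the benchmark looks related"
    return "squat improved but jump did not; maybe the wrong rabbit"


# Hypothetical numbers: squat 3RM in lbs, standing broad jump in inches.
print(carryover_check(275, 315, 96, 100))
```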

Difficult to Game

This is one of the hardest ones. Goodhart’s Law says that when a measure becomes a target, it ceases to be a good measure. I see this all the time in the business world, where people start chasing particular metrics for their own sake, disconnected from what the metric was supposed to support. People start hunting for shortcuts in order to squeeze out a little extra performance.

This is partly where the whole “gamer” reputation in sports like USPSA comes from. The desire to win drives equipment configurations and techniques that apply only to the game and have less in common with potential real-world defensive use.

Another way to think about this is teaching the test. John Simpson put it well: we shouldn’t use qualification tests as training. That’s just teaching people to get better at the qualification. Instead, we should focus on the real underlying skills and let the results show up on the scorecard.

This is also the point where people start trying to buy their way into higher performance with fancy weapons, modifications, optics, or gadgets. Often, the underlying problem is trigger control, but they mask it with a lighter trigger. When their score improves on the metric, they chalk it up to “improvement” when they really just spent money.


Sensitive to Progress Without Noise

A good benchmark should reflect real progress when it happens. This is why I like repeating a single course of fire several times and taking the aggregate score. Hitting a new high score once in a thirty-shot run is exciting, but it doesn’t reveal much about your performance floor. Repeating that level over three runs and nearly one hundred shots tells a different story.

Be mindful of whether a metric is giving you enough data to see real change. A single five-shot group has a lot more noise than a ten-shot group, which has more noise than three ten-shot groups. This is the signal-to-noise ratio at work.
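To see why more shots means less noise, you can simulate a shooter whose skill never changes and watch how much the measured result wobbles from session to session. This Python sketch assumes shots land with normally distributed horizontal and vertical error around the point of aim, and it uses mean radius from the aim point as the score. Both are simplifying assumptions, and the function names are hypothetical.

```python
import math
import random
import statistics


def mean_radius(shots: list[tuple[float, float]]) -> float:
    """Average distance of each shot from the point of aim at (0, 0)."""
    return statistics.fmean([math.hypot(x, y) for x, y in shots])


def simulate_session(rng: random.Random, n_shots: int,
                     sigma: float = 1.0) -> list[tuple[float, float]]:
    """One simulated session: shots scatter normally around the aim point."""
    return [(rng.gauss(0, sigma), rng.gauss(0, sigma)) for _ in range(n_shots)]


def measurement_noise(n_shots: int, trials: int = 2000, seed: int = 1) -> float:
    """Spread of the mean-radius score across sessions when skill never changes."""
    rng = random.Random(seed)
    results = [mean_radius(simulate_session(rng, n_shots)) for _ in range(trials)]
    return statistics.stdev(results)


for n in (5, 10, 30):
    print(f"{n:>2} shots: noise {measurement_noise(n):.3f}")
```

Because the simulated shooter never changes, all of the spread reported by `measurement_noise` is noise. It shrinks roughly with the square root of the shot count, which is why thirty shots tell you more than five.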

Don’t anchor yourself to your best run. Anchor on what you can repeat.
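Recording every run, not just the best one, makes it easy to track your floor. A minimal Python sketch, assuming three runs of the same course in one session with made-up scores:

```python
import statistics


def summarize_runs(scores: list[int]) -> dict[str, float]:
    """Summarize repeated runs of the same course of fire."""
    return {
        "best": max(scores),                  # exciting, but can be a fluke
        "floor": min(scores),                 # what you can count on
        "aggregate": sum(scores),             # total across all runs
        "average": statistics.fmean(scores),  # aggregate divided by run count
    }


runs = [268, 281, 274]  # hypothetical scores from three runs in one session
print(summarize_runs(runs))
```

From one session to the next, watch the aggregate and the floor. A higher best with an unchanged floor is more likely a good day than a new level.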

Long Runway for Growth

Good metrics have high performance ceilings. A beginner and a multi-decade expert could use the same benchmark, and the results would show a wide gap between them. We don’t want something that everyone can “max out” in a month. It should be something that rewards mastery over time with clear, trackable progress.

Strength training is a great example. Progress slows down and becomes more difficult to earn, but that’s fine. There is still a very clear difference between a beginner and an advanced lifter. The runway is very long.

Our standard courses of fire work the same way. A perfect score might be possible on paper, but earning it is exceptionally difficult and could take many years of focused practice.

Now that you’ve seen the qualities of good benchmarks, let’s actually get to the meat of building a testing system.

Building a Testing Battery

As I said in a recent newsletter, springtime is assessment season. It’s the time of year to get out and see where you actually stand on the things you care about. The hard part is limiting yourself to just a few tests. Unless this is your full-time job, you don’t have the time or capacity to push every dial at once.

I suggest choosing 3-5 benchmarks total and spreading them between shooting and physical fitness. Run them under repeatable conditions, record your results, and build a training plan designed to move the numbers. Then retest on purpose some time later.
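A notebook is plenty for recording results, but if you prefer a script, the main wrinkle is that some benchmarks improve when the number goes up (points, calories) and others when it goes down (ruck or run times). Here is a hypothetical Python record that handles both; the class name and all of the numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Benchmark:
    """Hypothetical record for one benchmark in a testing battery."""
    name: str
    unit: str
    higher_is_better: bool          # False for timed events like a run or ruck
    baseline: float
    retest: Optional[float] = None  # filled in after the planned retest

    def improvement(self) -> Optional[float]:
        """Positive means better, whether the unit is points or minutes."""
        if self.retest is None:
            return None
        delta = self.retest - self.baseline
        return delta if self.higher_is_better else -delta


battery = [
    Benchmark("Precision Pistol Course of Fire", "points", True, 241, 256),
    Benchmark("4-Mile Ruck (40 lbs)", "minutes", False, 62.5, 58.0),
    Benchmark("10-Minute Assault Bike", "calories", True, 148),  # not retested yet
]
for b in battery:
    print(b.name, b.improvement(), b.unit)
```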

A benchmark shouldn’t be a single drill, like a Bill Drill. Single drills are useful for training, but they’re often too narrow and easy to “solve” to serve as a primary yardstick.

Your benchmark should be comprehensive: a particular course of fire from a match, or one of our standard rifle/pistol courses. The point is that it blends multiple skills into one score so you can train the components in isolation and see how each one moves the result.

Start by writing down a list of metrics and benchmarks that you care about and filtering them through the criteria we just covered.

If you’re drawing a blank, here are some ideas to start. Don’t overthink this; it’s a menu. Pick a few that match what you can do.

Shooting Tests:

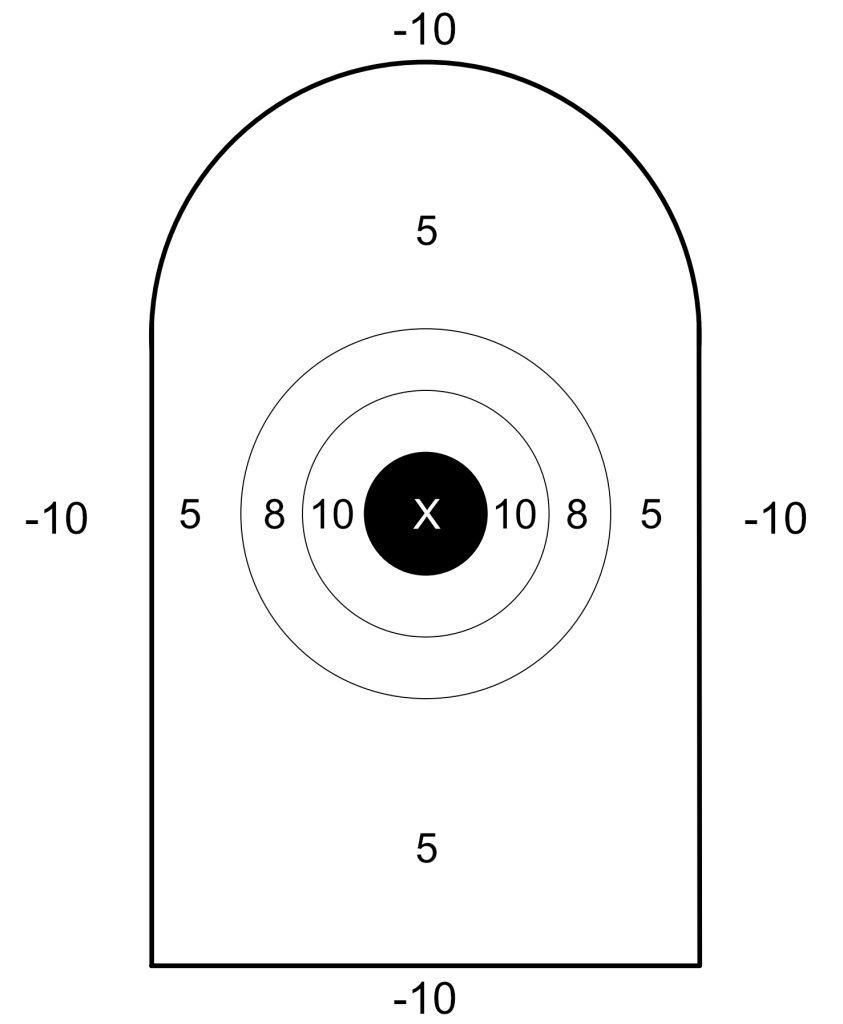

- Precision Pistol Course of Fire

- Action Pistol Course of Fire

- Basic Rifle Marksmanship Course of Fire

- Defensive Rifle Course of Fire

- Pat Mac 500 Aggregate

- Any recurring match with consistent stages (e.g., Steel Challenge)

Comprehensive Fitness Tests:

- Everyday Marksman Level 1 Fitness Standards

- Everyday Marksman Level 2 Fitness Standards

- The New US Army Combat Fitness Test

Strength/Power Tests:

- 3RM on a Benchmark Lift (e.g., bench press, squat, overhead press, deadlift)

- Standing Broad Jump

- Max Repetitions of Weighted Pull-Ups With +25 lbs

Endurance Cardio Tests:

- 1.5-Mile Run, 2-Mile Run, or a 5km Run

- 4-Mile Ruck for Time With 40 lbs

- 20-Minute Average Watts on a Stationary Bike

- 5km Row

High-Intensity Conditioning Tests:

- Max Calories in 10 Minutes on an Assault Bike

- 2km Row for Time

- 500-Meter Row Sprint

- 10-Minute Max KB Snatches With a 24kg Kettlebell

And just because I think it’s important to track: body composition. You should know your waist-to-height ratio or some other approximation of your body fat percentage.
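Waist-to-height ratio is simple arithmetic: waist circumference divided by height, both measured in the same unit. A small Python sketch with hypothetical measurements follows. The 0.5 line in the comment comes from commonly cited guidance (the UK’s NICE, for example, advises keeping your waist below half your height).

```python
def waist_to_height(waist: float, height: float) -> float:
    """Waist circumference divided by height; use the same unit for both."""
    if waist <= 0 or height <= 0:
        raise ValueError("measurements must be positive")
    return waist / height


# Hypothetical measurements in inches (centimeters work the same way).
ratio = waist_to_height(waist=34, height=70)
print(f"{ratio:.2f}")  # 0.49; commonly cited guidance suggests staying below 0.50
```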

Once you have this list, it’s time to pick your rabbit and commit to it.


Keep it Simple

Now that you have the list, here’s the hard part: pick just a few. I suggest one pistol benchmark, one rifle benchmark, and one or two fitness benchmarks.

For shooting, choose between precision or speed (for now). In other words, select either precision pistol or action pistol, but not both. Same for rifle. It’s not that you won’t ever get back around to it, just stick to chasing one rabbit at a time. You can do precision for pistol and speed for rifle, if you want. Those are different enough that you aren’t diluting your training focus.
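If it helps to see the shooting rule spelled out, here is a hypothetical Python check: exactly one focus (precision or speed) per platform, while different focuses across pistol and rifle are fine. The function name and the focus labels are assumptions for illustration.

```python
VALID_FOCUSES = {"precision", "speed"}


def check_shooting_picks(pistol: list[str], rifle: list[str]) -> list[str]:
    """Return a list of problems; an empty list means the picks follow the rule."""
    problems = []
    for platform, focuses in (("pistol", pistol), ("rifle", rifle)):
        unknown = set(focuses) - VALID_FOCUSES
        if unknown:
            problems.append(f"{platform}: unknown focus {sorted(unknown)}")
        if len(focuses) != 1:
            problems.append(
                f"{platform}: pick one of precision or speed, not {len(focuses)}"
            )
    return problems


# Precision pistol plus a speed-focused rifle course: no problems.
print(check_shooting_picks(pistol=["precision"], rifle=["speed"]))  # []
# Precision and action pistol at the same time: one problem flagged.
print(check_shooting_picks(pistol=["precision", "speed"], rifle=["speed"]))
```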

Keep in mind that I included three comprehensive fitness tests that combine multiple strength and conditioning elements. Either pick one of those, or build your own. If you want to build your own, I’d suggest general strength work (3RM testing, broad jump, pull-ups) plus one or two conditioning benchmarks.

With the conditioning tests, you can improve both endurance and high-intensity capacity at the same time, but be mindful of your ability to recover. Pick one endurance test and one high-intensity test, ideally from different modalities. My favorite spring/summer combination would be rucking for endurance and the assault bike test for something higher intensity. In fall/winter, it might be the 20-minute stationary bike with the 10-minute kettlebell snatches. Choose what fits your needs; just monitor your fatigue.

If I were choosing my own benchmarks right now to run for the next six months, it would look like this:

- Precision Pistol Course of Fire

- Defensive Rifle Course of Fire

- 4-Mile Ruck

- 10-Minute Assault Bike

Testing Frequency

Remember: training is not testing. Your training should aim to improve performance on the test, but it shouldn’t look like the test. Most of your work should live below maximum effort, with occasional days to validate whether the plan is working.

Testing frequency has a lot to do with the relative “cost” of the test. For example, shooting a pistol course of fire could be done every week because it has training value on its own and you can rapidly chart progress. Or, you could do it once per month while you spend the intervening time focusing only on specific elements like sight picture and trigger control during the slow fire phases (slow fire tends to be my weakest point).

Fitness tests are different. They have a higher physiological cost, and doing them too frequently will burn you out. I wouldn’t test these more often than once per quarter. Between tests, follow an intelligent training program that directly contributes to progress on your chosen goal.

That’s not to say you can’t run a 5k more than once per quarter, or do a 4-mile ruck with 40 lbs. I’m saying that those sessions should be done at a controlled pace that you improve gradually over time, not as a maximum effort you repeat twice per week. That’s how people get injured.

After 3-6 months of training and seeing progress, feel free to switch your benchmark and goals up. There’s a very high probability that the work you did chasing one key benchmark for 3-6 months carries over into the other elements and you see improvement there, too. Then do another 3-6 months chasing a different benchmark and come back.
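Rotation is easy to sketch out ahead of time, too. This hypothetical Python helper cycles through a list of focus areas in fixed-length blocks. The six-month default and the focus names are assumptions for the example, and a “focus” could just as well be a whole battery as a single benchmark.

```python
from itertools import cycle, islice


def rotation_plan(focus_areas: list[str], blocks: int,
                  months_per_block: int = 6) -> list[tuple[int, int, str]]:
    """Return (start_month, end_month, focus) for each block, cycling through focus_areas."""
    plan = []
    for i, focus in enumerate(islice(cycle(focus_areas), blocks)):
        start = i * months_per_block
        plan.append((start, start + months_per_block, focus))
    return plan


for start, end, focus in rotation_plan(["Precision Pistol", "Action Pistol"], blocks=4):
    print(f"months {start}-{end}: {focus}")
```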

Tying it Together

If I could summarize this whole thing into a single line, it’s this: Focus your energy on a small number of meaningful benchmarks, pursue them relentlessly, then rotate them periodically.

That’s it. That’s the system.

Pick a handful of quality benchmarks, seriously commit to training to improve them (and only them) for 3-6 months, then retest. After that, rotate to a different benchmark for the next 3-6 month block, and repeat. Since we’re keeping our scope to shooting and fitness, the carryover from one goal to the next is natural. Improving in one area helps your performance in the next one, and so on.

Then repeat this cycle for years.

I said it was simple, not necessarily easy.

Go Forth and Be Awesome

In a way, this is an evolution of my thinking on Tactical Minimalism. Rather than focusing on a single technique for any given task, we’ve expanded the idea to cover systems of measurement. It’s about focusing relentlessly on one main area at a time, then building broader capability over the long run by rotating related benchmarks.

With that said, now it’s your turn. What are your benchmarks, and how do you plan to attack them?